UNI-1

Less Artificial. More Intelligent.

Uni-1 is a multimodal reasoning model that can generate pixels.

Built on Unified Intelligence, Uni-1 understands intention, responds to direction, and thinks with you.

Read the technical report to learn more.

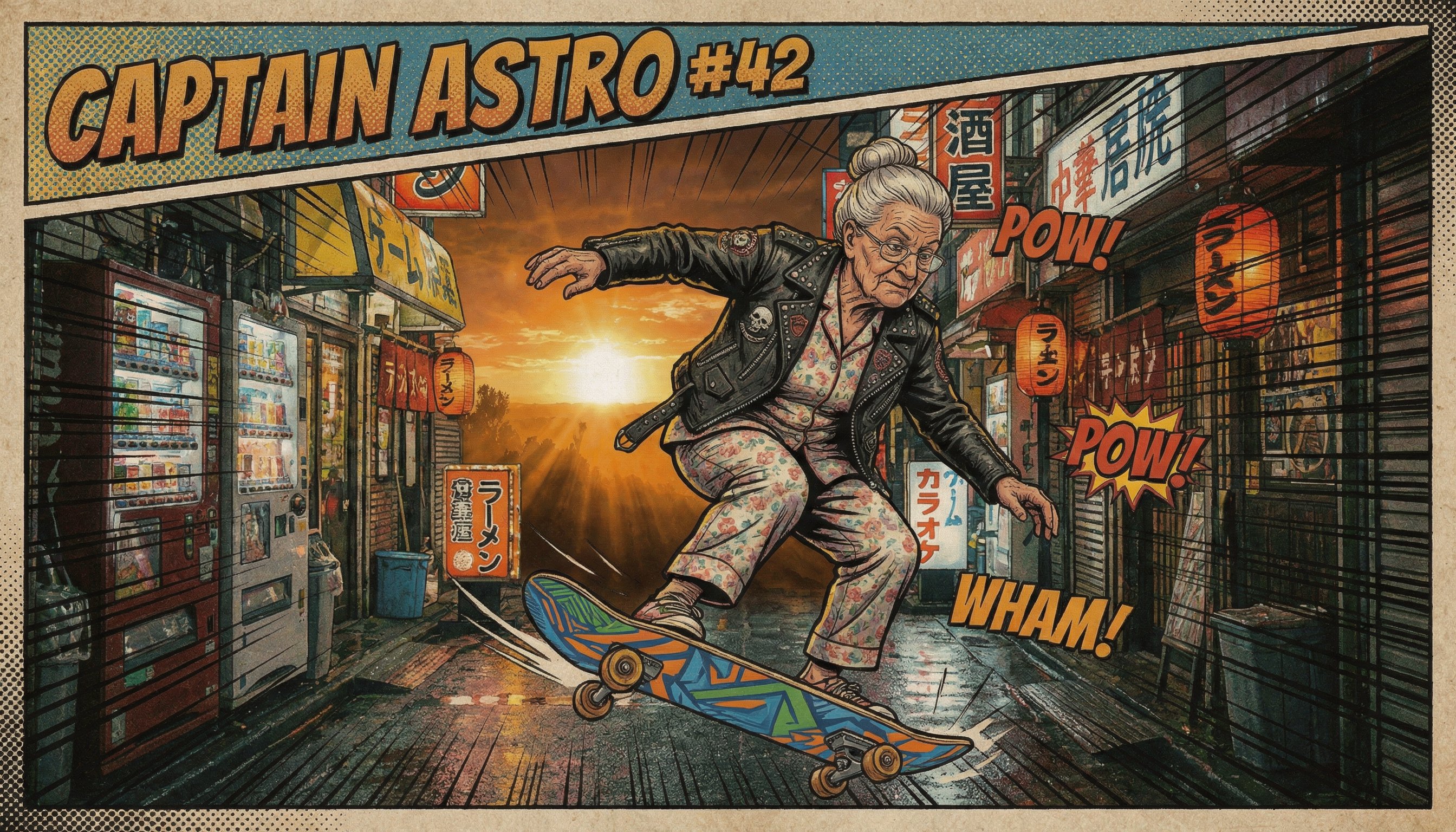

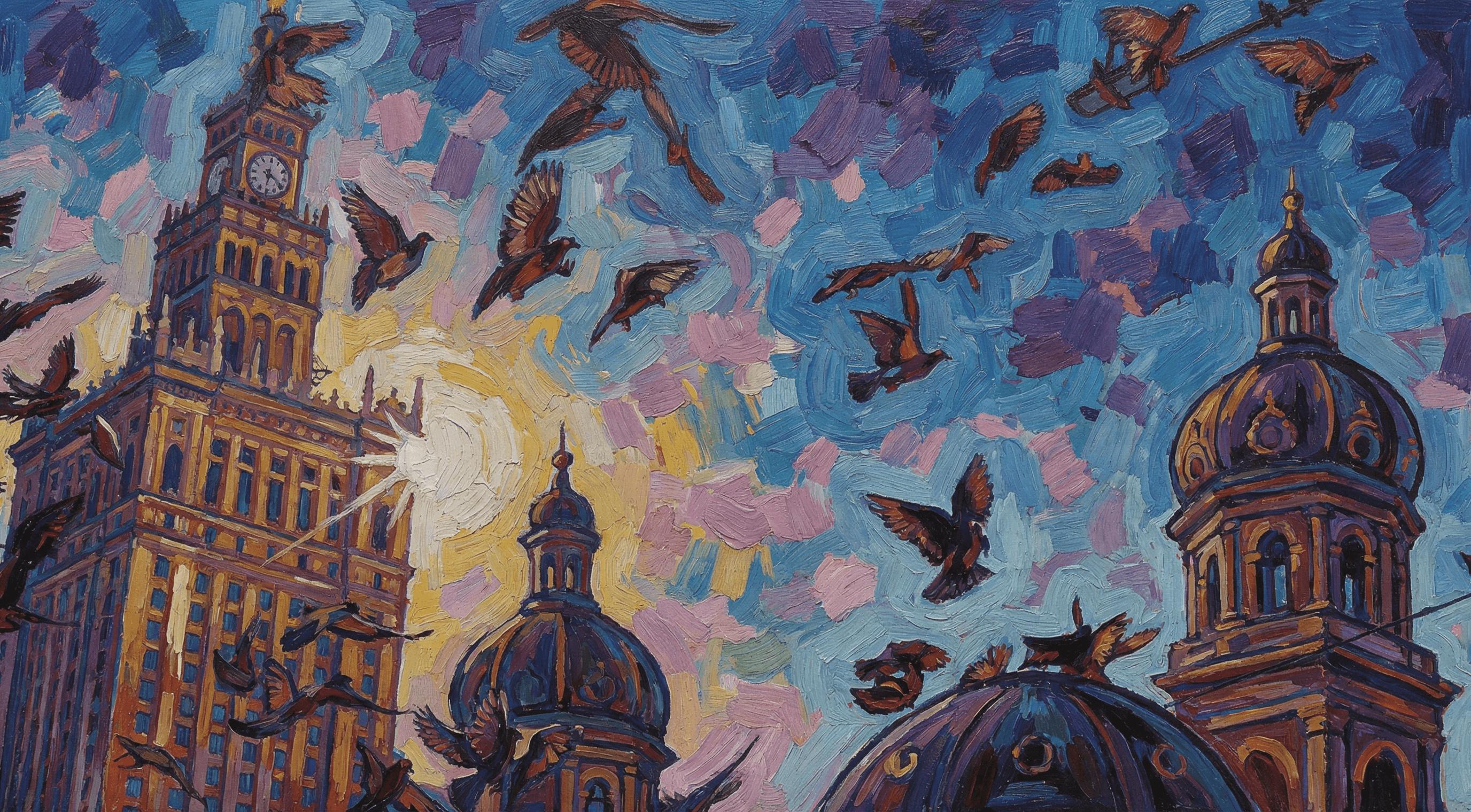

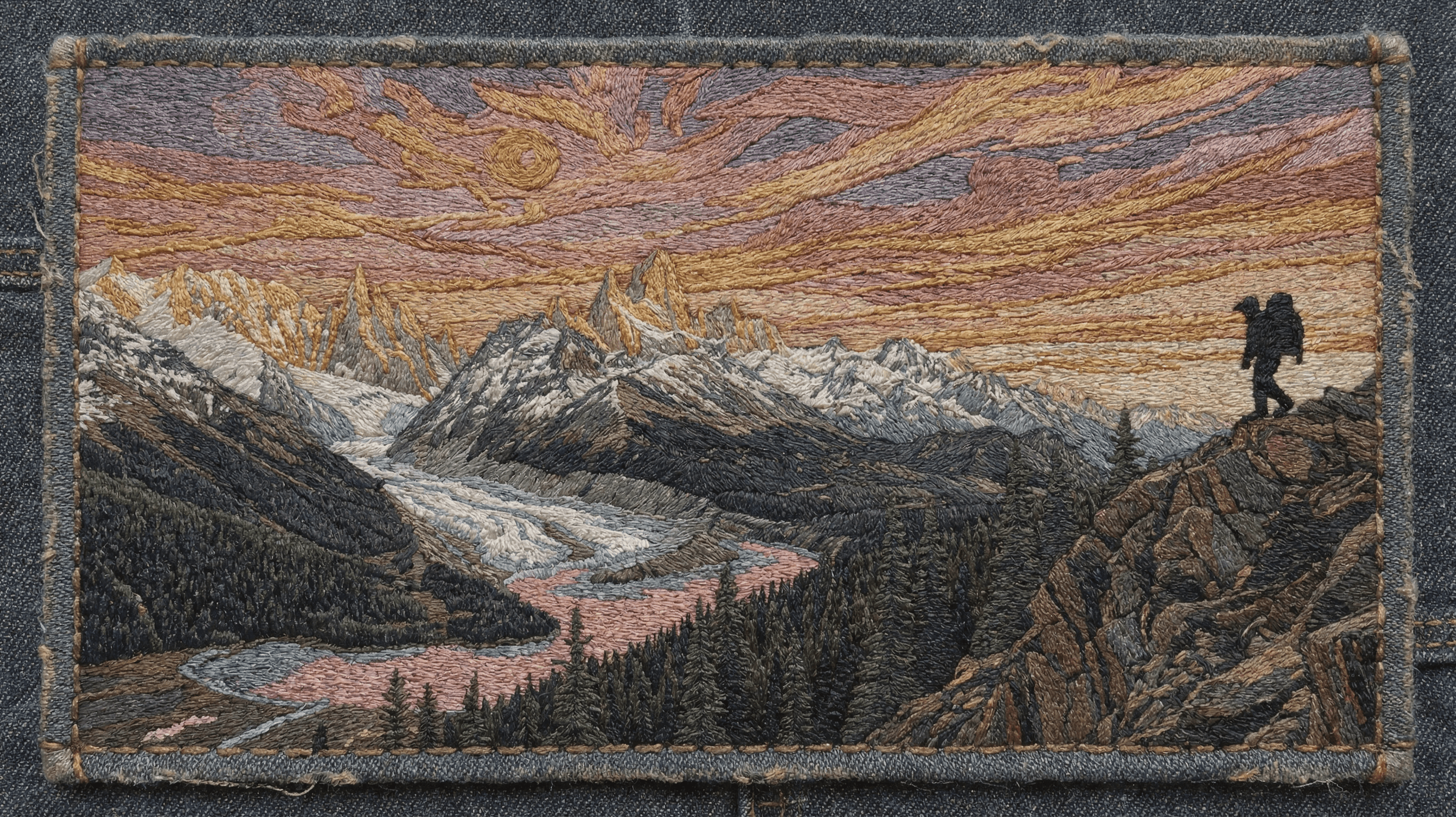

What Uni-1 can create

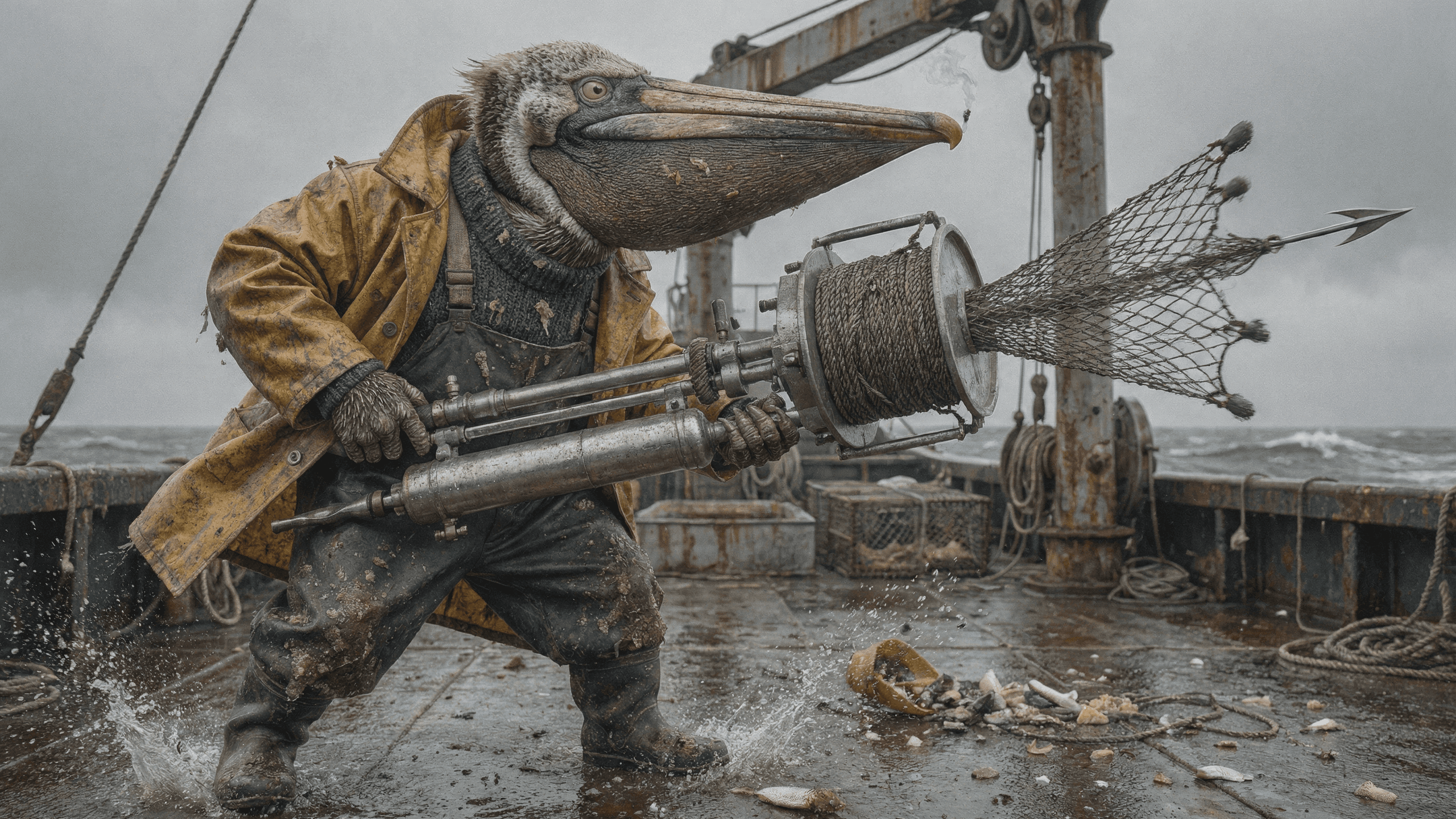

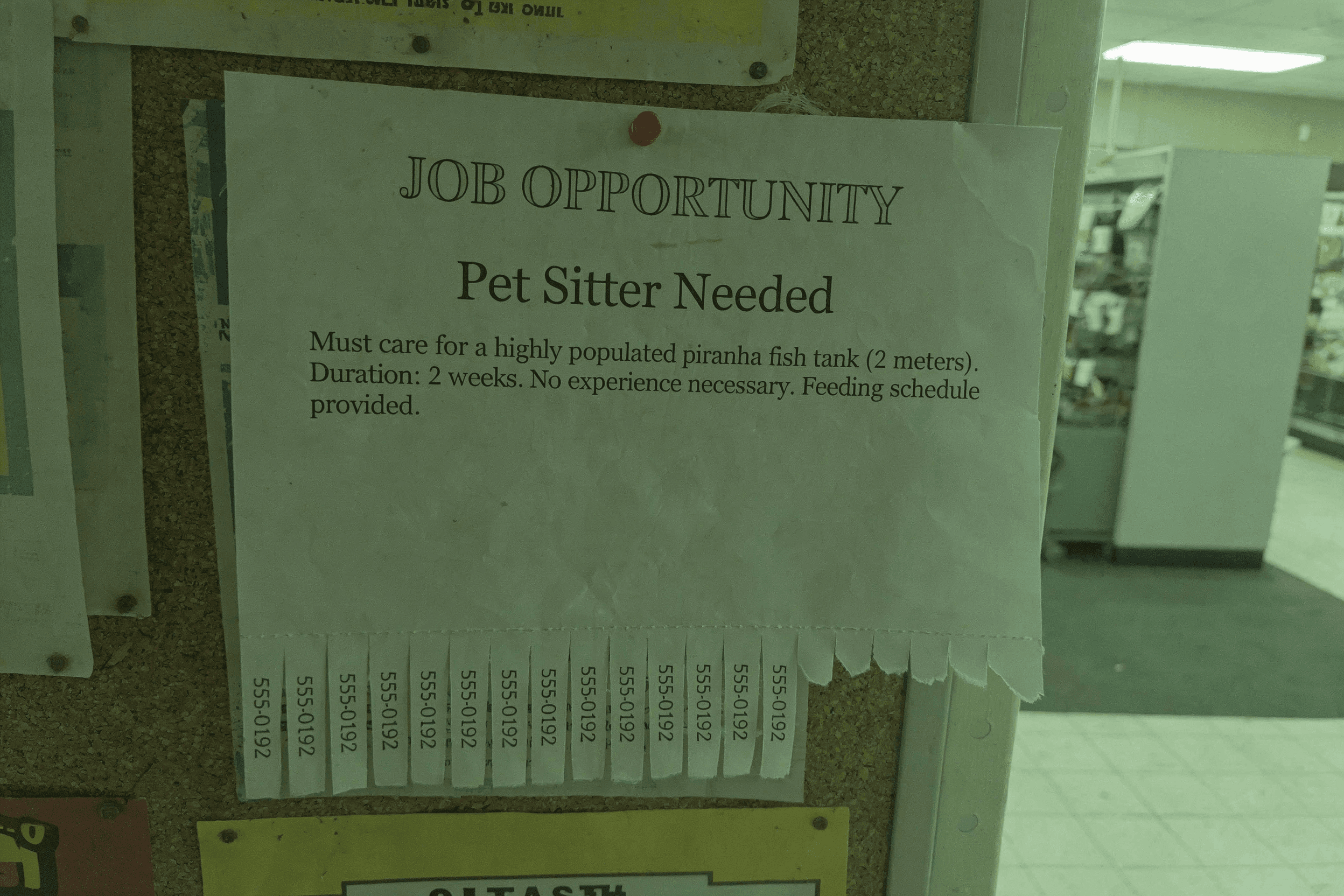

Intelligent

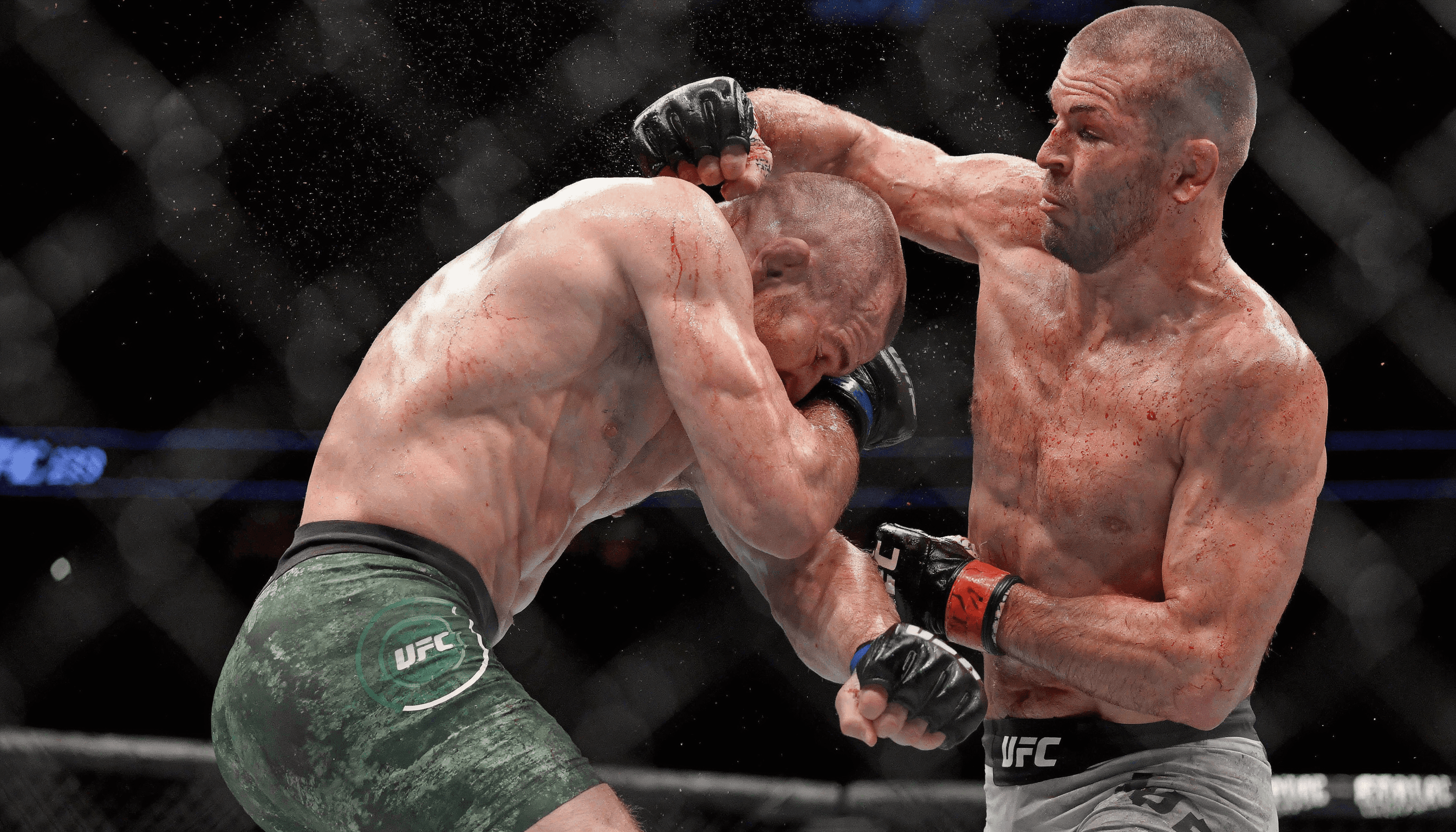

Common-sense scene completion, spatial reasoning, and plausibility-driven transformation.

Layers

More examples

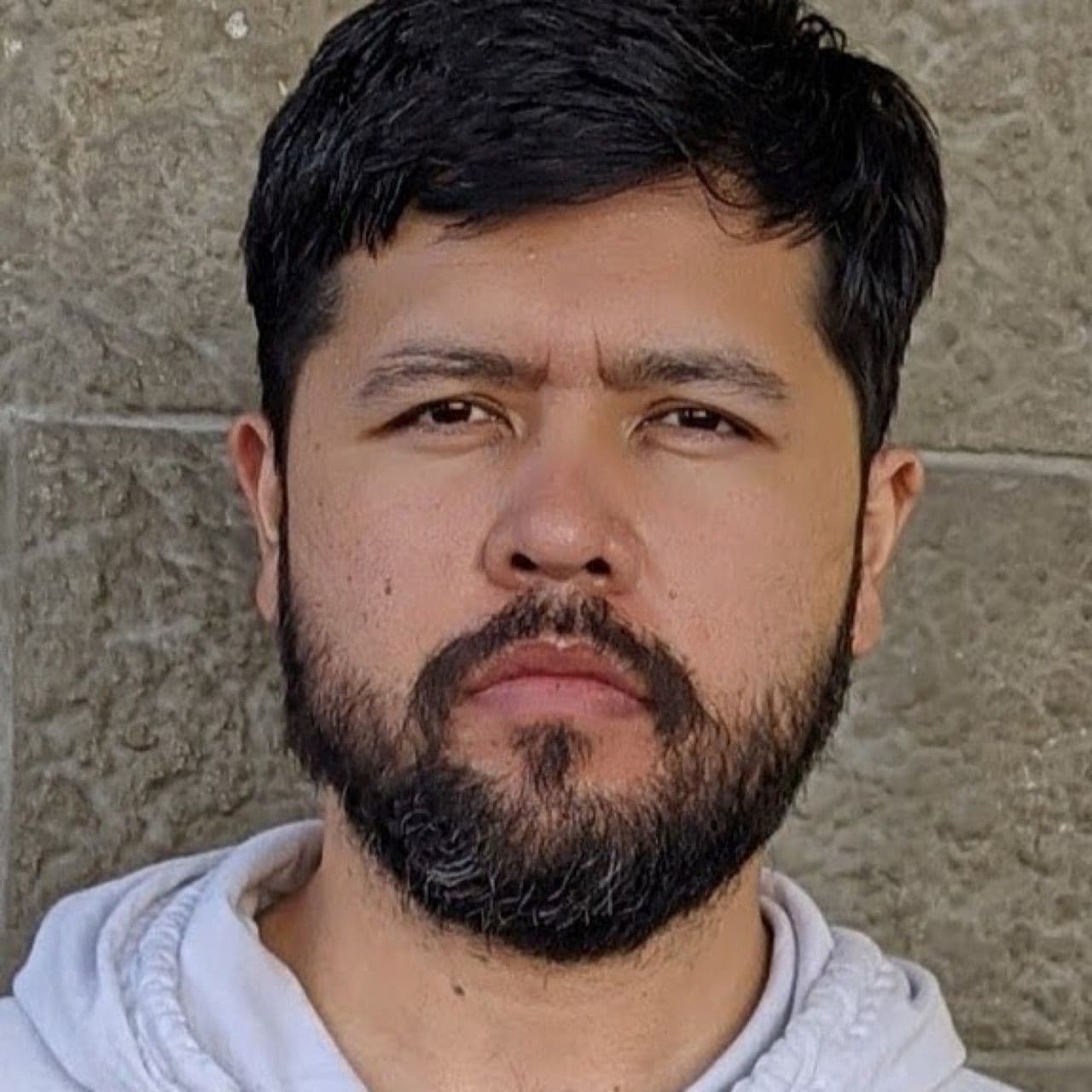

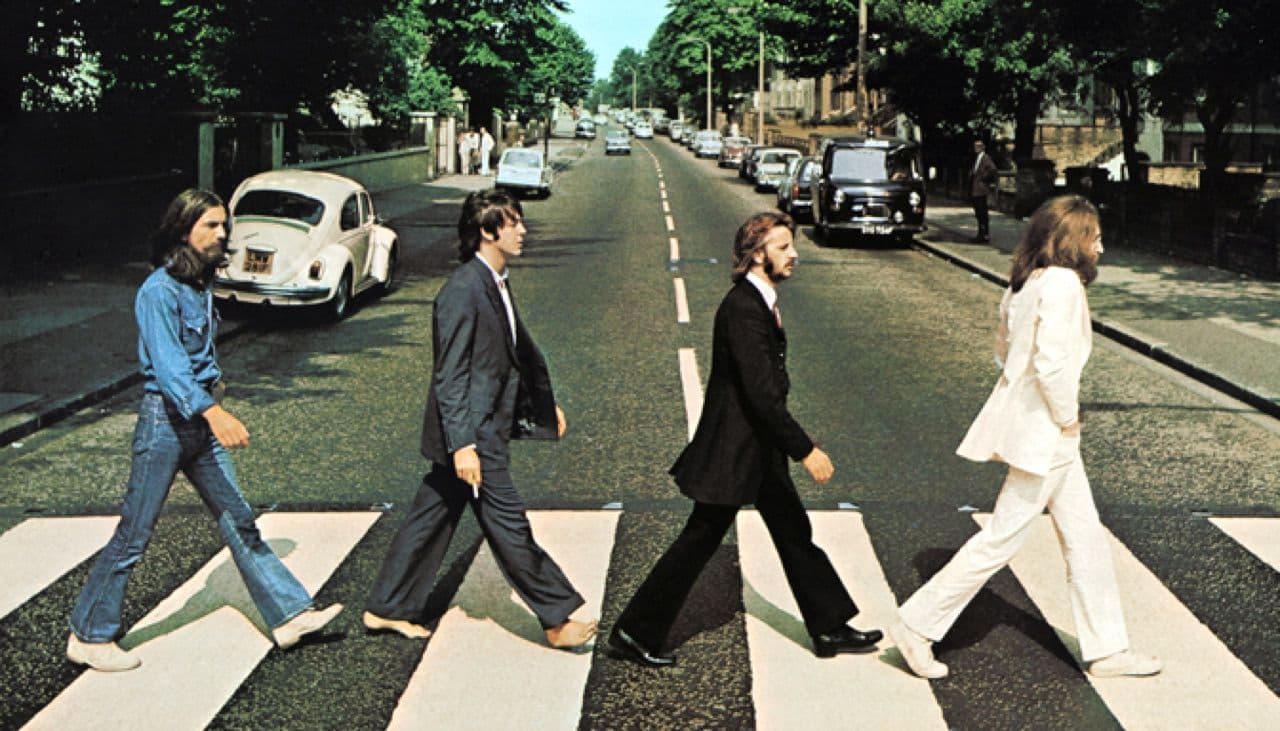

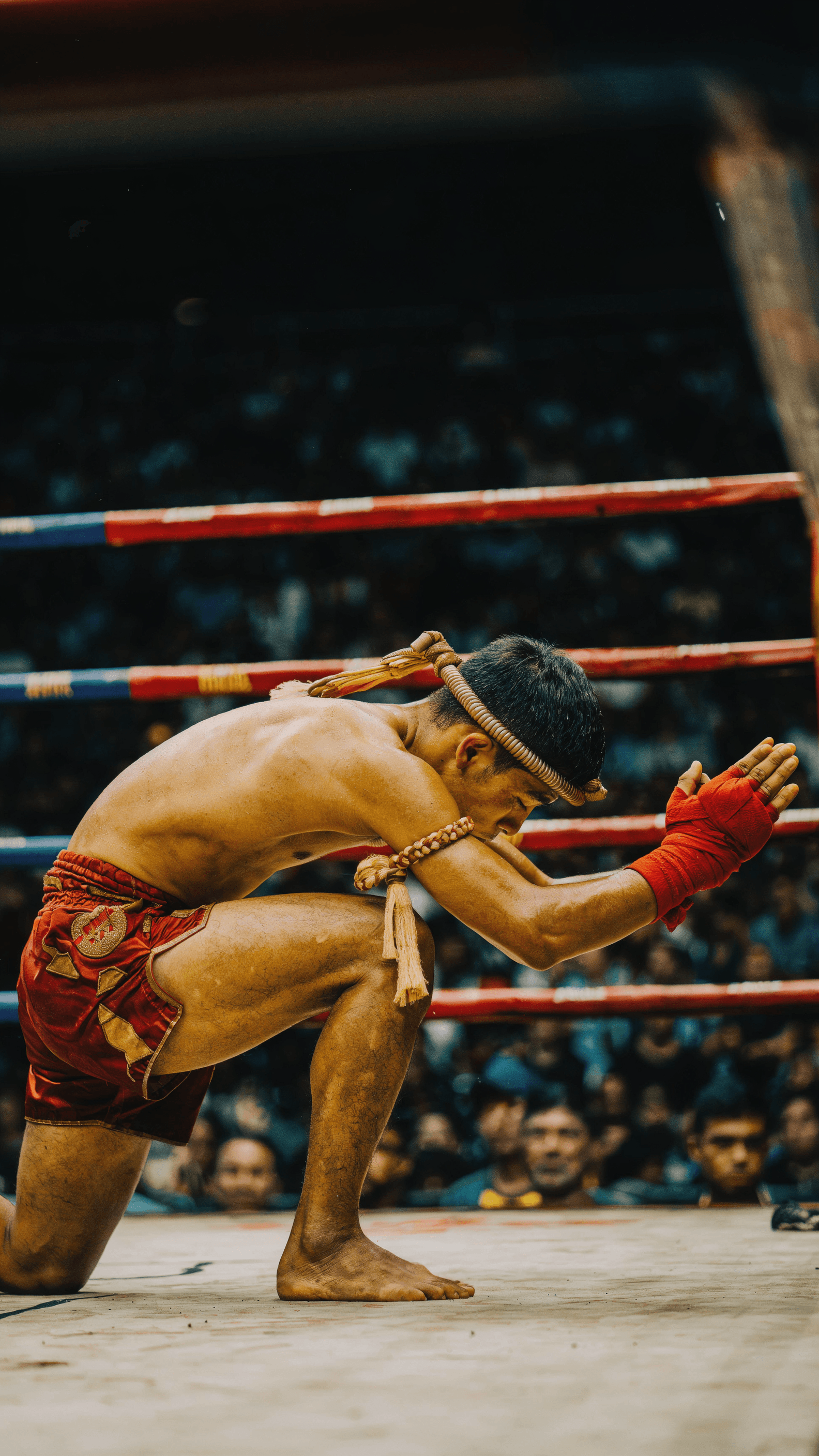

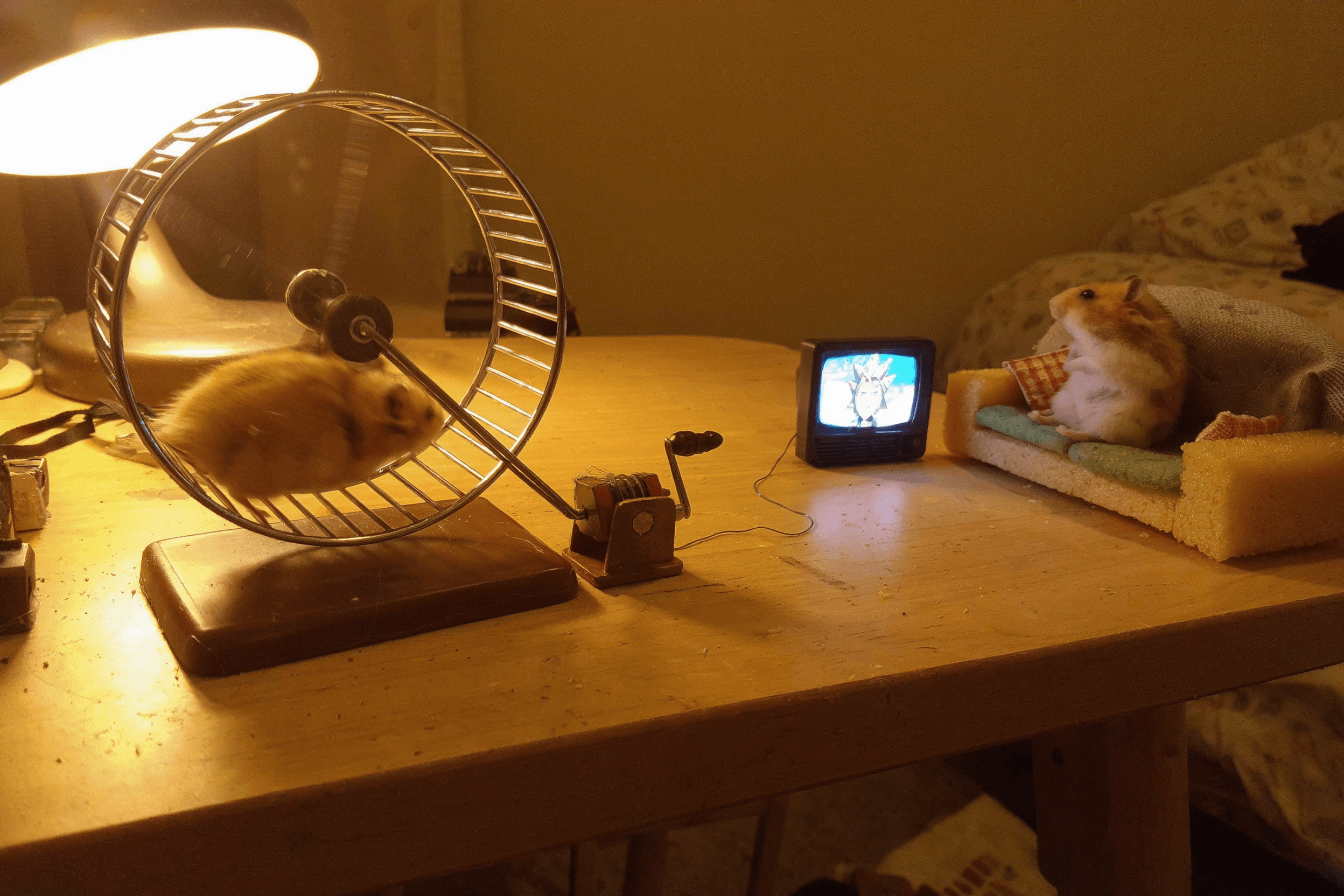

Directable

Reference-guided generation with source-grounded controls.

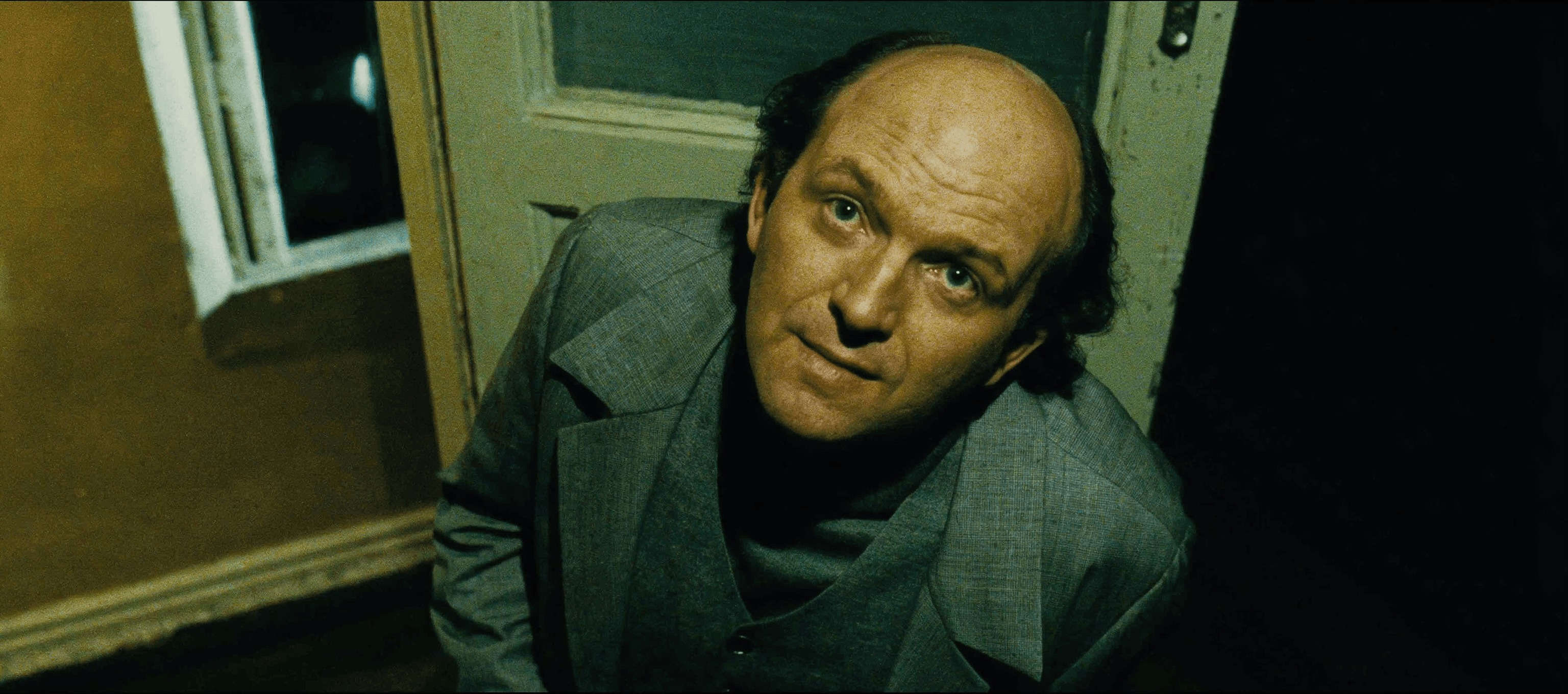

Input images: Input 1 through Input 6

More examples

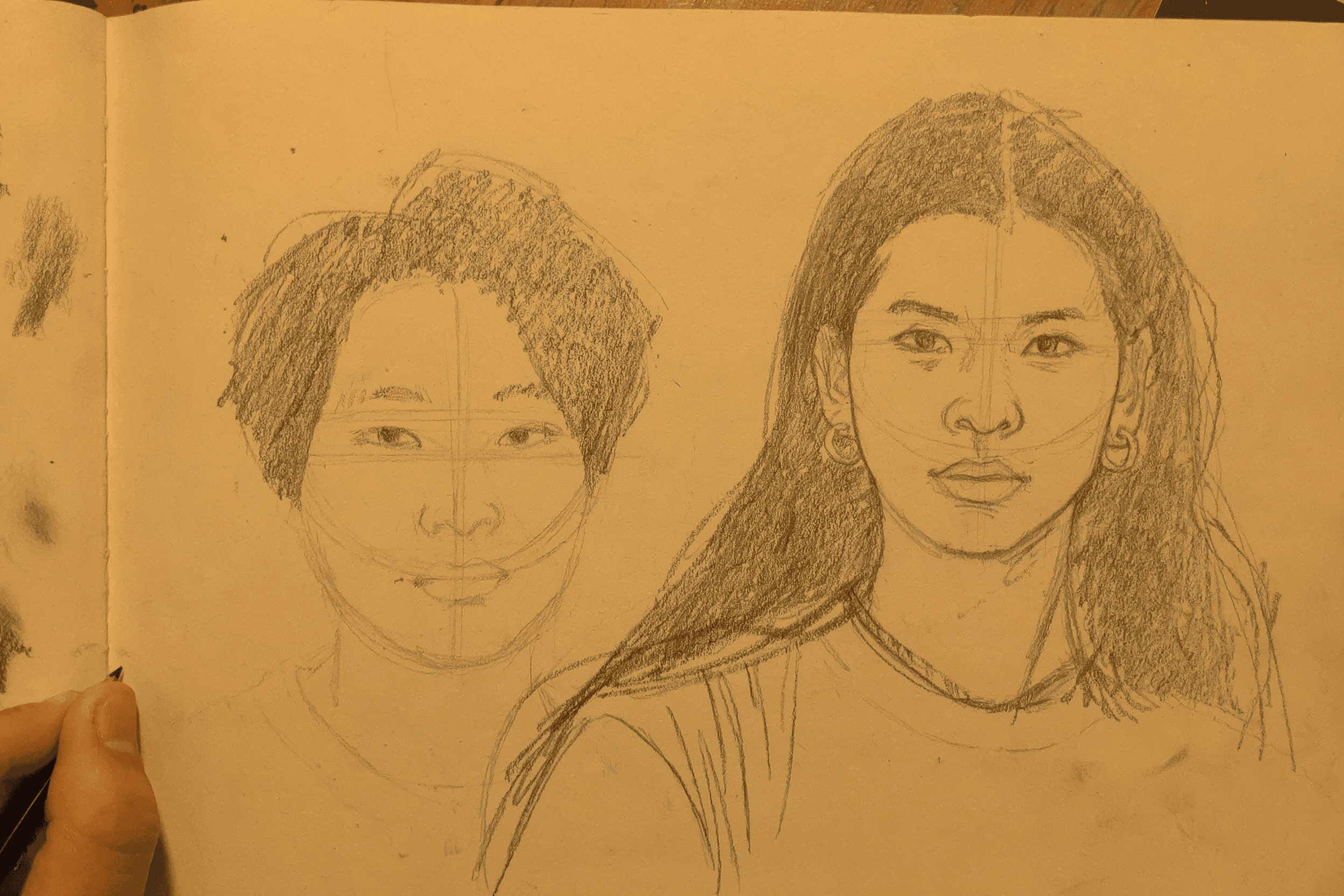

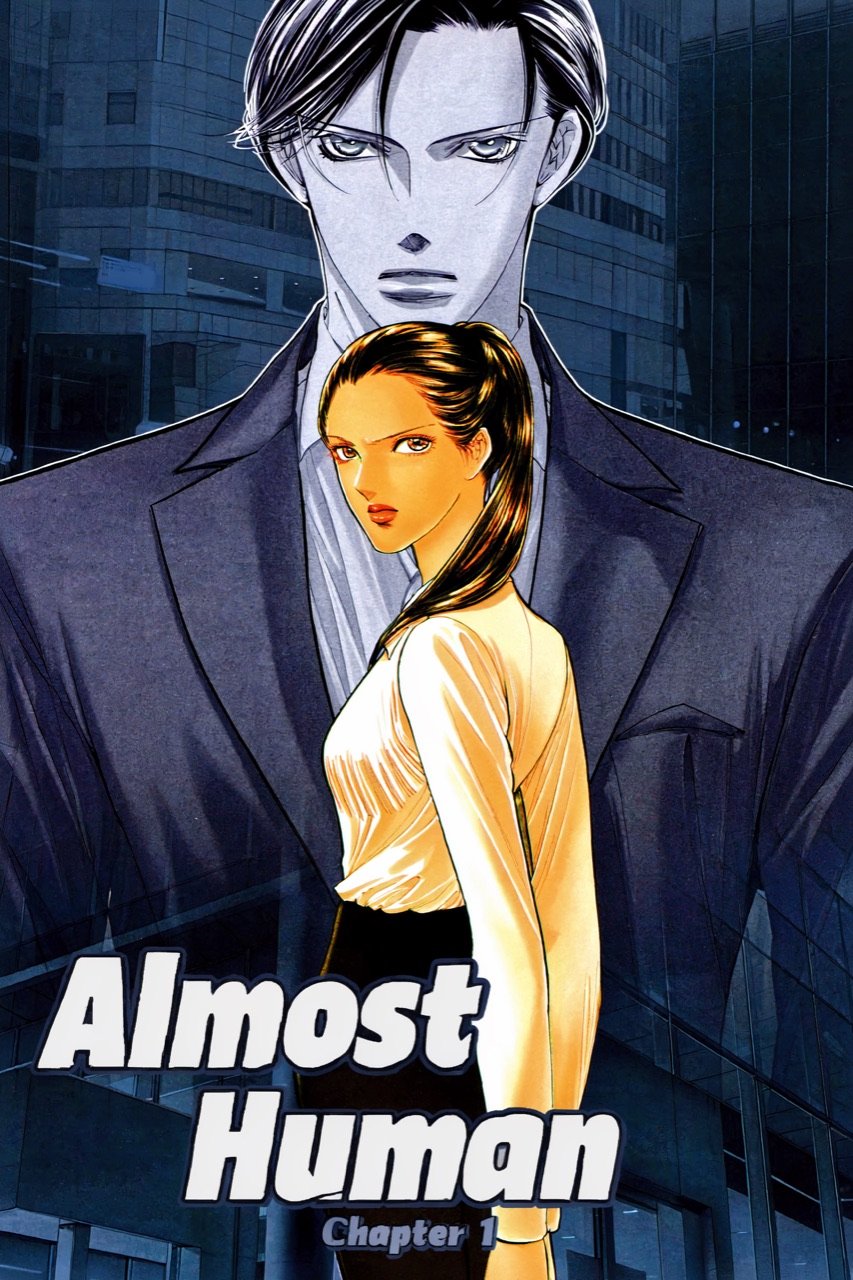

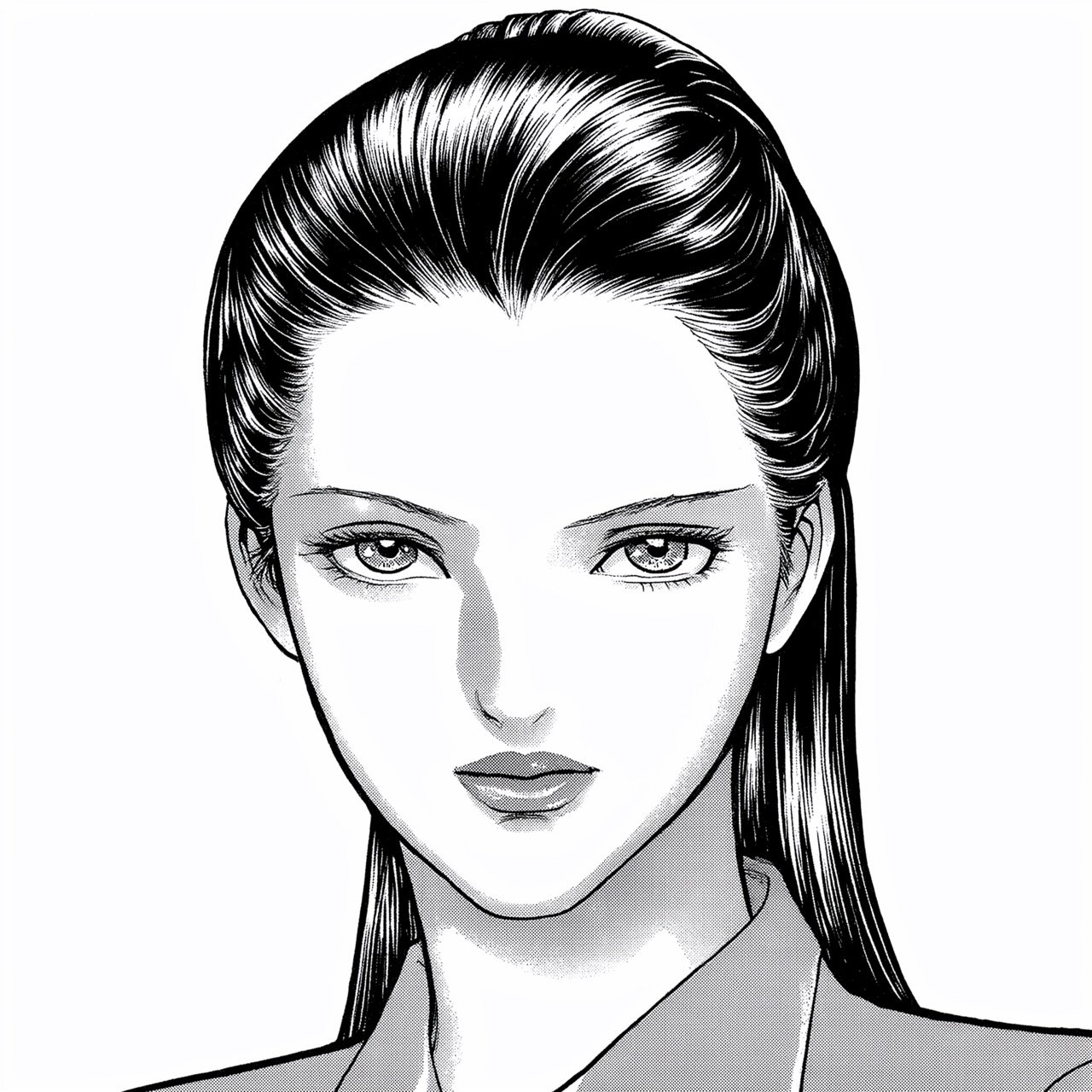

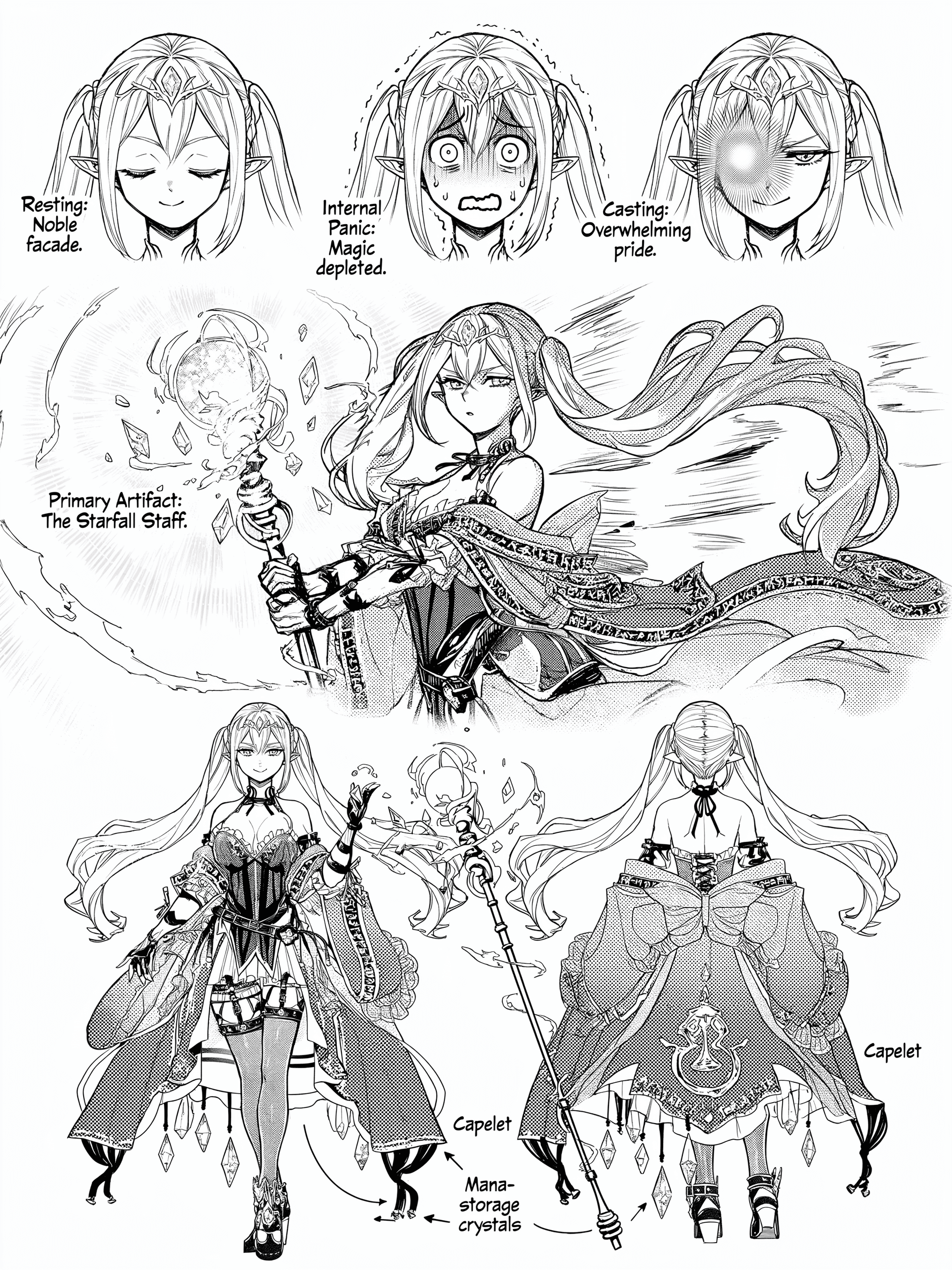

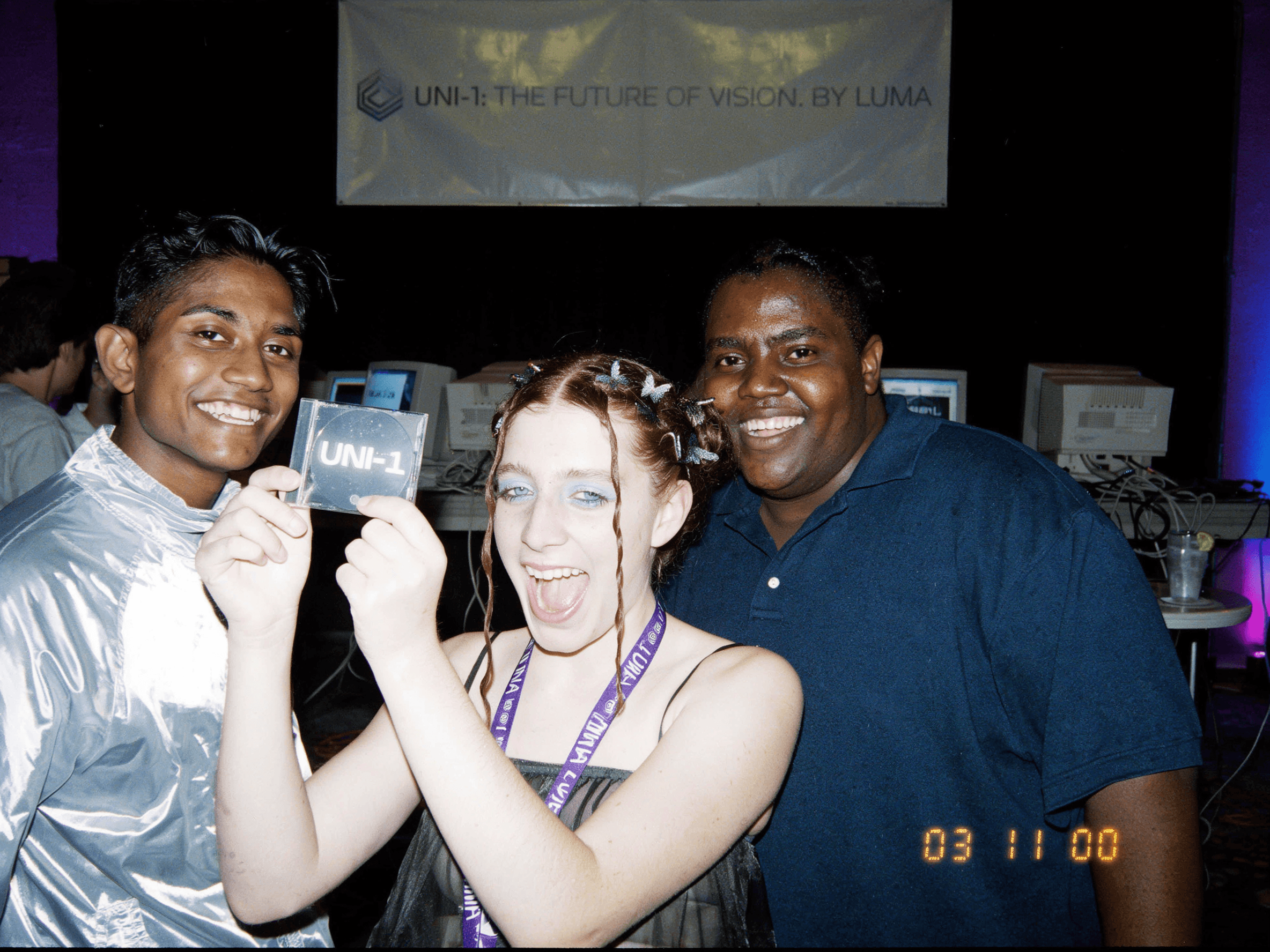

Cultured

Culture-aware visual generation across aesthetics, memes, and manga.

Character Reference

Portrait

Full Body

More examples

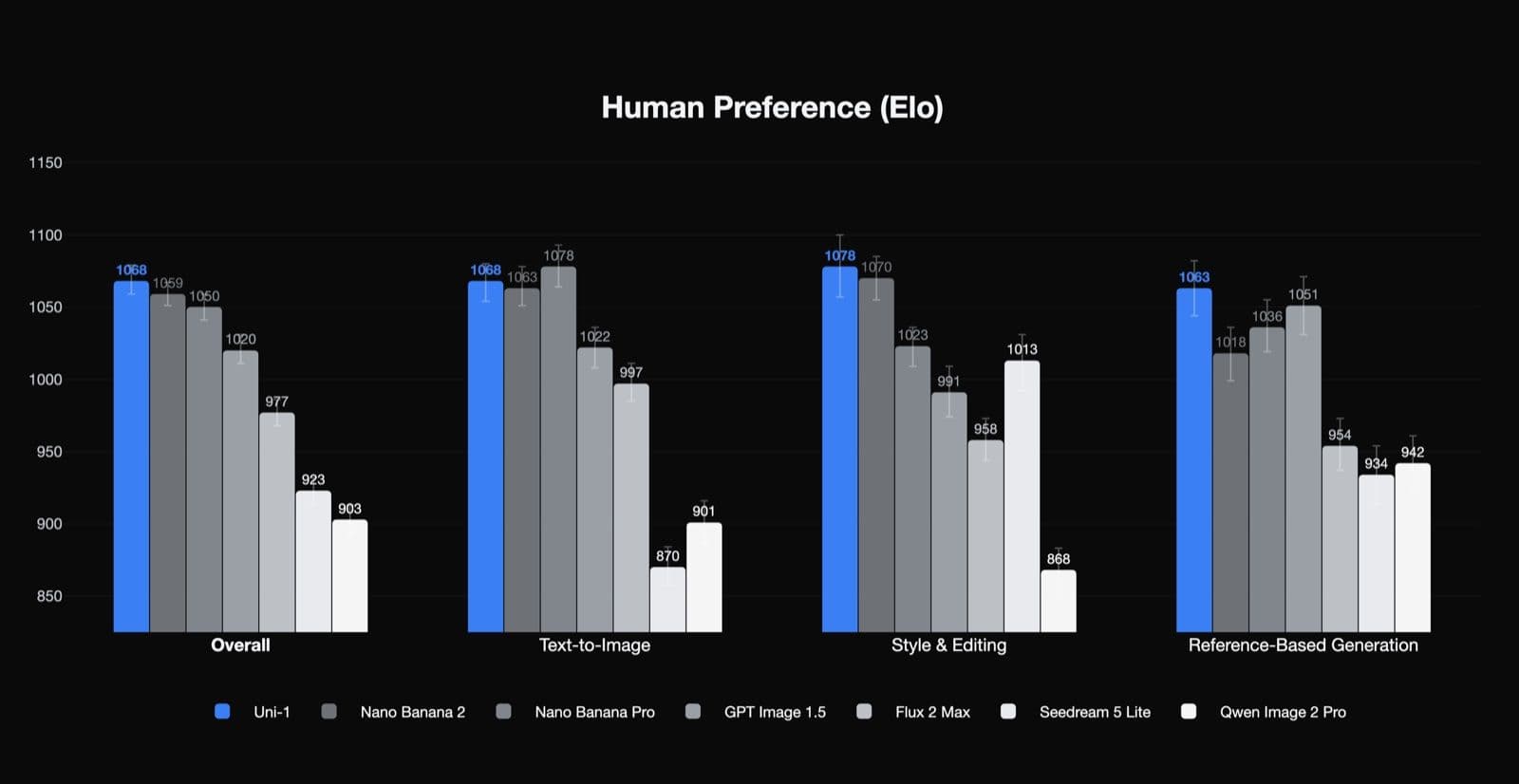

Evaluations

Uni-1 ranks first in human preference Elo for Overall, Style & Editing, and Reference-Based Generation, and second in Text-to-Image.

Image Generation Pricing

Equivalent per-image price*

*Per-image prices are derived from billing token counts. Each image (input or output) counts as 2,000 billing tokens at current settings. All prices are in USD.
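To make the footnote's arithmetic concrete, here is a minimal sketch of how a per-request cost could be derived from the 2,000-tokens-per-image figure. The function name, the per-million-token quoting convention, and the rates used in the example are all hypothetical; the page does not state actual token rates here, so substitute the published ones.

```python
from decimal import Decimal

# From the pricing footnote: each image (input or output) is billed
# as 2,000 tokens at current settings.
TOKENS_PER_IMAGE = 2_000


def request_cost_usd(
    input_images: int,
    output_images: int,
    input_rate_per_million: Decimal,
    output_rate_per_million: Decimal,
) -> Decimal:
    """Estimate the USD cost of one request from image counts.

    Assumption (not from the source): rates are quoted in USD per
    one million billing tokens, separately for input and output.
    """
    million = Decimal(1_000_000)
    input_cost = input_rate_per_million * (TOKENS_PER_IMAGE * input_images) / million
    output_cost = output_rate_per_million * (TOKENS_PER_IMAGE * output_images) / million
    return input_cost + output_cost


# Hypothetical rates, for illustration only.
example = request_cost_usd(
    input_images=2,
    output_images=1,
    input_rate_per_million=Decimal("1"),
    output_rate_per_million=Decimal("10"),
)
print(example)
```

Using `Decimal` rather than floats keeps the currency arithmetic exact, which matters when small per-image amounts are summed over many requests.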

Get API Access

Join the waitlist to get early API access to Uni-1.

We'll notify you when the API is available.