Colorspace Field Guide

March 31, 2026

Most AI-generated images and videos are designed to look good on a screen.

Luma is designed to hold up in a pipeline.

That distinction matters more than it sounds.

In VFX and filmmaking, you’re not just creating images; you’re creating assets that need to survive compositing, grading, relighting, and delivery across formats. And that all comes down to one thing:

how much color and light information you actually have to work with.

That’s where Luma’s color space capabilities fundamentally change what generative AI can be used for.

The Real Limitation Of Most AI Outputs

Most generative models today output:

- 8-bit images or compressed video

- Rec.709 / sRGB color space

- Display-referred imagery

In practice, that means:

- Highlights are clipped or baked in

- Colors are already compressed

- You have very limited flexibility in post

They’re great for final pixels.

But they’re not designed for production workflows.
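To make the clipping problem concrete, here is a minimal sketch of what a display-referred 8-bit encode does to bright values. The sample intensities are hypothetical, and the encode is the standard sRGB curve rather than any particular model's output path.

```python
def srgb_encode(linear: float) -> float:
    """Standard sRGB transfer function for a linear value in [0, 1]."""
    if linear <= 0.0031308:
        return 12.92 * linear
    return 1.055 * linear ** (1 / 2.4) - 0.055


def to_8bit_display(scene_linear: float) -> int:
    """Clip to the display range, apply the sRGB curve, quantize to 8 bits."""
    clipped = min(max(scene_linear, 0.0), 1.0)
    return round(srgb_encode(clipped) * 255)


# Hypothetical scene-linear intensities: mid-grey, diffuse white, a lamp, a specular glint.
samples = [0.18, 1.0, 4.0, 16.0]
codes = [to_8bit_display(v) for v in samples]

# The three brightest samples all land on the same code value (255),
# so the difference between them cannot be recovered in post.
print(codes)
```

Once three different light levels share one code value, no grade can pull them apart again.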

What Luma Does Differently

Luma can generate outputs that behave like camera data, not just images.

Instead of baking everything into a display format, Luma’s HDR-capable models generate in a scene-referred, wide-gamut space, the same conceptual space used in professional cinema pipelines.

This is the key shift:

You’re not getting a finished image. You’re getting a starting point with headroom.

Luma’s HDR Output

For advanced workflows, the specifics matter, and this is where Luma is fundamentally different from every other AI system.

These capabilities are powered by Ray3.14, Luma’s HDR video model.

Ray3.14 is currently the only generative video model that can export true EXR outputs suitable for production pipelines.

Image / frame output (Ray3.14 HDR):

- Format: 16-bit (half-float) EXR

- Compression: DWAB (lossy, but intended to be perceptually lossless)

- Color space: ACES2065-1 (AP0 primaries)

- Encoding: scene-referred linear

This is not a marketing definition of HDR.

This is the same class of data you would expect from a high-end cinema pipeline.
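As a rough illustration of what 16-bit float storage means in practice, the sketch below round-trips a few values through IEEE 754 half precision using Python's standard `struct` module. The sample values are hypothetical; the point is that values far above 1.0 are stored, at the cost of a small relative rounding error.

```python
import struct


def through_half(value: float) -> float:
    """Round-trip a Python float through IEEE 754 binary16 (the EXR 'half' type)."""
    return struct.unpack("<e", struct.pack("<e", value))[0]


# Values above 1.0 survive, unlike in an 8-bit integer display encoding.
# 65504.0 is the largest finite value a half float can hold.
for v in (0.18, 1.0, 16.0, 1000.0, 65504.0):
    stored = through_half(v)
    rel_error = abs(stored - v) / v
    print(f"{v:>8} -> {stored!r}  (relative error {rel_error:.2e})")
```

Half floats carry 11 bits of significand, so the relative rounding error stays below about 0.05% across the whole range, in shadows as well as highlights.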

Why This Is A Big Deal

You’re working in one of the widest color containers

ACES AP0 is one of the largest color spaces used in production.

Its primaries even extend beyond the colors humans can see, which sounds strange but is useful: every visible color fits inside the container.

It means:

- Nothing is prematurely clipped

- Color transformations remain stable

- You can convert downstream without losing fidelity

Most AI tools give you a final look. Luma gives you a master source.

You keep full dynamic range

Because outputs are scene-referred linear:

- Highlights aren’t baked in

- Shadows retain detail

- Exposure can be adjusted in post

You can treat Luma outputs like footage, not like a flattened render.
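A minimal sketch of why linear data makes exposure changes safe: in scene-referred linear, an exposure change of N stops is a multiplication by 2 to the power N. The pixel values and the helper name `adjust_exposure` are hypothetical.

```python
def adjust_exposure(pixel, stops):
    """Scale scene-linear RGB by 2**stops; one stop doubles or halves the light."""
    gain = 2.0 ** stops
    return tuple(channel * gain for channel in pixel)


# Hypothetical scene-linear highlight, far above display white (1.0).
highlight = (12.0, 9.0, 6.0)

# Pull the exposure down three stops: the channel ratios (the color) are preserved.
recovered = adjust_exposure(highlight, -3)
print(recovered)  # (1.5, 1.125, 0.75)

# The same pixel after display clipping has lost that relationship.
clipped = tuple(min(c, 1.0) for c in highlight)
print(adjust_exposure(clipped, -3))  # (0.125, 0.125, 0.125)
```

The unclipped highlight keeps its warm tint when darkened; the clipped one collapses to neutral grey.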

You can convert to any pipeline you need

Starting in AP0 means you can move downstream into any working or delivery space:

- ACEScg

- Rec.709

- P3

- BT.2020

- Log formats (LogC, etc.)

With minimal loss.

The reverse is not true.

If you start in a smaller color space, you’ve already thrown away information.
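As a sketch of the first conversion in that list, AP0 to ACEScg (AP1 primaries) is a single 3x3 matrix multiply because both spaces are linear. The coefficients below are the commonly published ACES values rounded to ten decimals; treat them as something to verify against your own OCIO config. The pixel values and the helper name `apply_matrix` are hypothetical.

```python
# AP0 -> AP1 (ACES2065-1 -> ACEScg) and its inverse.
AP0_TO_AP1 = (
    (1.4514393161, -0.2365107469, -0.2149285693),
    (-0.0765537734, 1.1762296998, -0.0996759264),
    (0.0083161484, -0.0060324498, 0.9977163014),
)
AP1_TO_AP0 = (
    (0.6954522414, 0.1406786965, 0.1638690622),
    (0.0447945634, 0.8596711185, 0.0955343182),
    (-0.0055258826, 0.0040252103, 1.0015006723),
)


def apply_matrix(matrix, rgb):
    """Multiply a 3x3 matrix by an RGB triple."""
    return tuple(sum(m * c for m, c in zip(row, rgb)) for row in matrix)


pixel_ap0 = (0.30, 0.45, 0.20)  # hypothetical scene-linear AP0 value
pixel_acescg = apply_matrix(AP0_TO_AP1, pixel_ap0)
round_trip = apply_matrix(AP1_TO_AP0, pixel_acescg)
print(pixel_acescg)
print(round_trip)  # matches pixel_ap0 to within floating-point rounding

# A fully saturated AP0 green lies outside AP1, so ACEScg needs negative components.
saturated = apply_matrix(AP0_TO_AP1, (0.0, 1.0, 0.0))
print(saturated)
```

The negative components in the last line are what "smaller space" means in practice: a float pipeline can carry them, but any step that clamps to zero discards that color for good.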

You are not locked into a display

Most AI outputs are display-referred – what you see is what you get.

Luma outputs are display-independent.

You decide:

- How it should look

- Where it will be shown

- How it should be graded

This is how VFX pipelines operate.

A Quick Mental Model

Think of the difference like this:

- Typical AI output = JPEG

- Luma HDR output = RAW / EXR

One is a finished image.

The other is a flexible source you can shape.

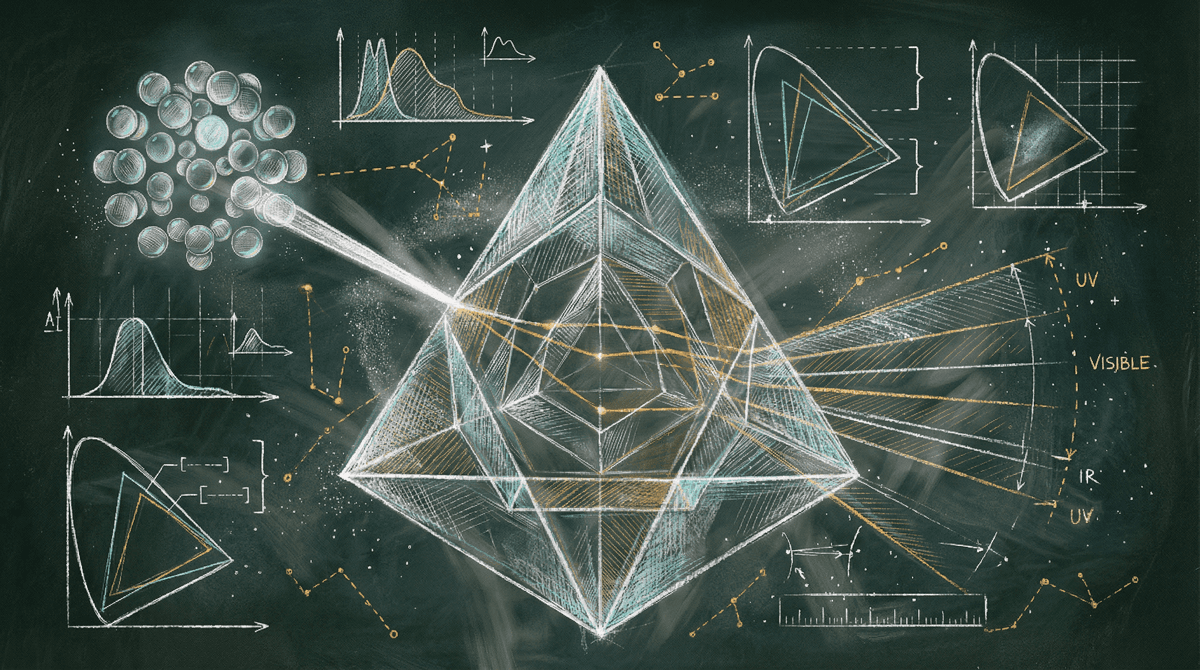

Color Spaces

To understand why this matters, you only need two concepts:

Color gamut = the range of colors you can represent

Dynamic range = the span between the darkest and brightest light values you can represent

The key takeaway:

- Smaller spaces (Rec.709) = less information

- Larger spaces (ACES AP0) = more information

Luma operates at the large end of that spectrum.
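One rough way to compare gamut sizes is the area of each space's primary triangle on the CIE 1931 xy chromaticity diagram. The sketch below uses the published primaries for each space. The xy diagram is not perceptually uniform, so the ratios are only an approximate proxy for "how many colors".

```python
# CIE 1931 xy chromaticities of the red, green, and blue primaries.
PRIMARIES = {
    "Rec.709": ((0.640, 0.330), (0.300, 0.600), (0.150, 0.060)),
    "P3": ((0.680, 0.320), (0.265, 0.690), (0.150, 0.060)),
    "BT.2020": ((0.708, 0.292), (0.170, 0.797), (0.131, 0.046)),
    "ACES AP0": ((0.7347, 0.2653), (0.0000, 1.0000), (0.0001, -0.0770)),
}


def triangle_area(points):
    """Area of a triangle from its three (x, y) vertices (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2


areas = {name: triangle_area(p) for name, p in PRIMARIES.items()}
for name, area in areas.items():
    ratio = area / areas["Rec.709"]
    print(f"{name:9s} area {area:.4f}  ({ratio:.2f}x Rec.709)")
```

Note the negative y coordinate of the AP0 blue primary: it sits outside the region of real, visible colors, which is how the AP0 triangle manages to enclose all of them.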

Scene-referred Vs Display-referred

Most AI tools output display-referred images:

- Already tone-mapped

- Already color-compressed

- Optimized for viewing, not editing

Luma outputs scene-referred data:

- Represents real light values

- Not tone-mapped

- Ready for compositing and grading

That’s the difference between:

“Looks good now”

vs

“Can be shaped later”

What Happens In Real Workflows

Even though Luma generates HDR/EXR:

- Previews are tone-mapped to SDR

- Thumbnails are compressed further

- Playback versions may be Rec.709

But the original EXR remains untouched.

This is critical.

What you see in preview is not the full fidelity of what you have.
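The relationship between source and preview can be sketched as a one-way derivation. The code below is an assumption-heavy stand-in: it uses a simple Reinhard curve and a 2.2 gamma, not whatever transform Luma's previews actually apply, and the sample values are hypothetical.

```python
def reinhard(value: float) -> float:
    """Simple Reinhard curve x / (1 + x): maps [0, inf) into [0, 1)."""
    return value / (1.0 + value)


def make_preview(scene_linear):
    """Return a new list of 8-bit codes; the scene-linear source is not modified."""
    return [round(reinhard(max(v, 0.0)) ** (1 / 2.2) * 255) for v in scene_linear]


source = [0.02, 0.18, 1.0, 8.0, 64.0]  # hypothetical scene-linear samples
preview = make_preview(source)

print(preview)  # compressed 8-bit codes, suitable only for viewing
print(source)   # the full-range values are still intact
```

The preview squeezes a range of more than 3000:1 into 256 codes, so judging highlight detail from it says little about what the EXR holds.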

Why This Unlocks New Use Cases For AI

Because of this output format, Luma can be used in ways most AI tools cannot:

- Direct integration into VFX pipelines (Nuke, Houdini, etc.)

- Professional color grading workflows (Resolve, Baselight)

- Multi-shot consistency and relighting

- HDR delivery pipelines

You’re not adapting AI outputs into production.

You’re generating assets that already belong there.

A Helpful Reminder

If you’re evaluating AI outputs for production, don’t ask:

“Does this look good?”

Ask:

- What color space is it in?

- What bit depth?

- Is it scene-referred?

That’s what determines whether it’s usable in a real pipeline.
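Two of those questions can be partly answered by reading the EXR header itself. Below is a minimal sketch of a header parser based on the published OpenEXR file layout, using only the standard library. It handles single-part files only, the function names are hypothetical, and a production tool should use the OpenEXR or OpenImageIO bindings instead.

```python
import struct

COMPRESSION = ["NONE", "RLE", "ZIPS", "ZIP", "PIZ", "PXR24", "B44", "B44A", "DWAA", "DWAB"]
PIXEL_TYPE = ["UINT (32-bit)", "HALF (16-bit float)", "FLOAT (32-bit)"]


def _cstring(data: bytes, pos: int):
    """Read a null-terminated ASCII string; return it and the next position."""
    end = data.index(b"\x00", pos)
    return data[pos:end].decode("ascii"), end + 1


def read_exr_header(data: bytes) -> dict:
    """Parse the attributes of a single-part OpenEXR header from raw bytes."""
    magic, version = struct.unpack_from("<II", data, 0)
    if magic != 20000630:
        raise ValueError("not an OpenEXR file")
    if version & 0x1000:
        raise ValueError("multi-part files are not handled by this sketch")
    attrs, pos = {}, 8
    while data[pos] != 0:  # an empty attribute name ends the header
        name, pos = _cstring(data, pos)
        type_name, pos = _cstring(data, pos)
        (size,) = struct.unpack_from("<i", data, pos)
        pos += 4
        attrs[name] = (type_name, data[pos:pos + size])
        pos += size
    return attrs


def describe(attrs: dict) -> dict:
    """Pull out the fields that relate to the checklist questions."""
    report = {}
    if "compression" in attrs:
        report["compression"] = COMPRESSION[attrs["compression"][1][0]]
    if "chromaticities" in attrs:  # red, green, blue, white as xy pairs
        report["chromaticities"] = struct.unpack("<8f", attrs["chromaticities"][1])
    if "channels" in attrs:
        raw, pos, channels = attrs["channels"][1], 0, {}
        while raw[pos] != 0:
            name, pos = _cstring(raw, pos)
            (ptype,) = struct.unpack_from("<i", raw, pos)
            channels[name] = PIXEL_TYPE[ptype]
            pos += 16  # pixel type, pLinear + 3 reserved bytes, x/y sampling
        report["channels"] = channels
    return report


# Usage with a hypothetical file path:
# with open("frame.0001.exr", "rb") as f:
#     print(describe(read_exr_header(f.read(65536))))
```

The header reports bit depth, compression, and (when the optional `chromaticities` attribute is present) the primaries. It cannot tell you whether the pixel values are scene-referred; that still has to come from the tool that wrote the file.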

Key Takeaway

Luma doesn’t just generate images; it generates production-grade color data.

By outputting 16-bit EXR in ACES AP0 (scene-referred), Luma gives you the same flexibility as high-end camera pipelines, so you can shape color and light after generation instead of being limited by what was baked in.